The battle over training and inference performance is intensifying by the day. SambaNova, Cerebras, and Groq are pushing the limits of token speed, posting record-breaking throughput on Meta’s Llama models.

Meanwhile, OpenAI’s o1 is heading the other way: it deliberately slows inference down to strengthen its reasoning capabilities (aka “thinking”). It allocates more compute at inference time and lets the model interact with external tools for deeper analysis, rather than relying solely on pre-training with large datasets.

These developments come against the backdrop of Oracle launching what it calls the world’s first zettascale cloud computing cluster, powered by NVIDIA’s Blackwell GPUs. The cluster offers up to 131,072 NVIDIA B200 GPUs, which Oracle says is more than six times what other cloud hyperscalers such as AWS, Azure, and Google Cloud offer. It delivers up to 2.4 zettaFLOPS of peak performance, while competitors have only reached exascale levels.
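To put the zettascale figure in perspective, the short calculation below divides the quoted aggregate throughput across the quoted GPU count. It is a back-of-the-envelope sketch that assumes the 2.4 zettaFLOPS number is an aggregate peak spread evenly over all 131,072 GPUs. The article does not state the numeric precision behind that figure, and the constant and function names are illustrative only.

```python
# Back-of-the-envelope arithmetic for Oracle's quoted cluster figures.
# Assumption: 2.4 zettaFLOPS is an aggregate peak spread evenly across all GPUs;
# the precision format behind the figure is not stated in the article.

CLUSTER_FLOPS = 2.4e21   # 2.4 zettaFLOPS (1 zetta = 10**21)
NUM_GPUS = 131_072       # maximum B200 count quoted for the cluster
EXA = 1e18
PETA = 1e15


def per_gpu_flops(total_flops: float, num_gpus: int) -> float:
    """Split aggregate peak throughput evenly across GPUs (hypothetical helper)."""
    return total_flops / num_gpus


per_gpu = per_gpu_flops(CLUSTER_FLOPS, NUM_GPUS)
cluster_exaflops = CLUSTER_FLOPS / EXA

print(f"Per GPU: {per_gpu / PETA:.1f} petaFLOPS")    # roughly 18.3
print(f"Cluster: {cluster_exaflops:,.0f} exaFLOPS")  # 2,400
```

Expressed in exaFLOPS, the cluster figure is 2,400, three orders of magnitude above the exascale mark that the article says competitors have reached.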

The need for speed in AI is only growing (see below).

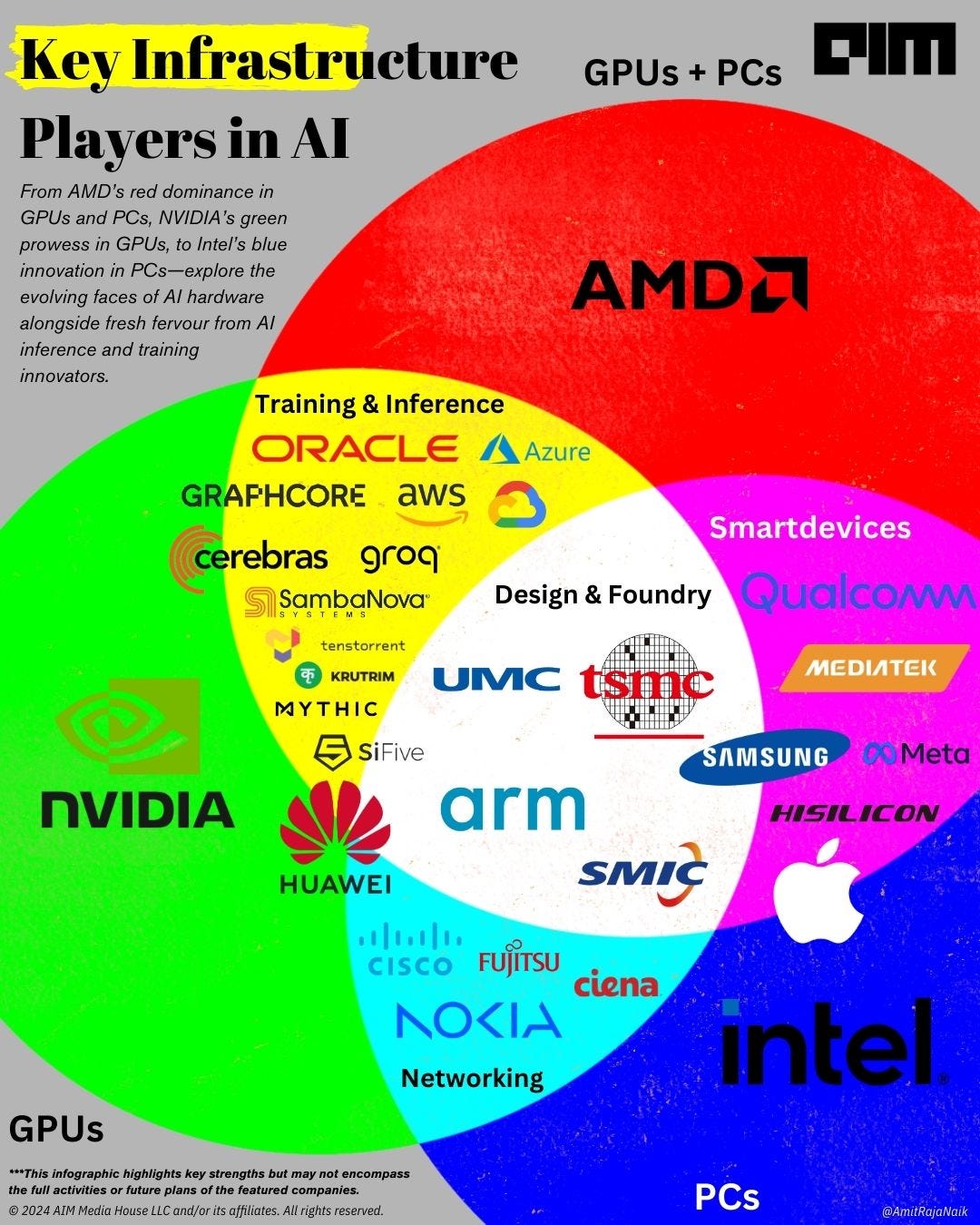

The infographic shows emerging trends in the AI hardware sector. At the forefront, companies such as AMD, NVIDIA, and Intel (shown in vibrant RGB colours) continue to drive advances in GPUs and PCs. They are supported by foundational chip design and foundry firms such as TSMC, SMIC, UMC, Arm, and SiFive (shown in white), whose expertise in chip design and manufacturing underpins the latest AI innovations.


Subscribe to Sector 6 | The Newsletter of AIM to keep reading this post and get 7 days of free access to the full post archives.